When Security Research Undermines Security

On new PhotoDNA white-box attacks and who benefits

Last week, researchers from the Computer Security and Industrial Cryptography (COSIC) research group headed by Bart Preneel at KU Leuven published “White-Box Attacks on PhotoDNA Perceptual Hash Function,” describing how to evade detection when sharing known child sexual abuse material (CSAM), and how to trigger false alarms that would send innocent images to human review. These cryptographic attacks affect current law enforcement practices, undermining ongoing EU child protection efforts. Who does that?

Lead author Maxime Deryck, former COSIC intern and Ghent University student, is a few years out from an engineering thesis on Fourier limits. Second author Diane Leblanc-Albarel is a COSIC postdoctoral researcher. Preneel, the group’s mentor, is a world leader in cryptography, a field whose dominant academic norm is anti-surveillance, epitomized in the 2014 IACR (International Association for Cryptologic Research) Copenhagen Resolution. The quieter other half of this professional conversation happens in corporate and state settings like Google and the NSA, where cryptographers working on such attacks are less likely to publish them for obvious reasons. What did they do?

The researchers reverse-engineered the internal design of PhotoDNA — the perceptual hash function currently used by Google, Meta, and NCMEC (the National Center for Missing and Exploited Children) to match circulating images against a database of known CSAM — and confirmed they had successfully done so by testing 1,000 such images against the real implementation (standard practice). This establishes that anyone with a laptop and a few minutes can add a small border to an image and circulate CSAM on common platforms like Gmail and Facebook without detection. The most computationally demanding attacks take under ten minutes on a personal laptop. The simplest takes seconds.

Privacy activist and former Pirate Party MEP Patrick Breyer issued a press release headlined “End of Chat Control: Paving the Way for Genuine Child Protection!” calling the paper “the final nail in the coffin” for current detection systems. This reflects a misunderstanding of what the paper does technically and consequently what it means politically.

Politically: Publishing these results right now, in the middle of live EU negotiations (e.g., ongoing trilogues) over CSAM scanning, with the exact methods included, hands a technical roadmap to the people those detection systems are designed to stop. Breyer has now amplified that roadmap to his entire audience. Proponents of mass surveillance often claim that their civil libertarian opponents are helping criminals; in this case, it actually appears to be true. And it was completely unnecessary.

I’ve been writing about the math of mass CSAM screening since 2023. I have argued against Chat Control 2.0 — mass client-side scanning of end-to-end encrypted communications — for years on statistical grounds. Last week, I posted a preprint of my paper showing that such mass end-to-end encrypted communications scanning for unknown CSAM would massively backfire, harming the very child safety interest it is ostensibly intended to advance (“When Mass Security Screening Backfires: Causal Modeling for Complex Equilibrium Systems”).

So you can be against mass scanning and for child safety at the same time. I am. These researchers may believe that they are, too. I am not sure they are right.

When my primary case study of mass screenings for low-prevalence problems was polygraphs, I didn’t work on countermeasures for the simple reason that I didn’t want to help the baddies. On one hand, different people’s moral intuitions will vary in valid and socially useful ways about what research is ethical. On the other hand, actively doing science to undermine current police practices is not the most obvious public service research agenda.

I believe that senior scientists have a special responsibility to mentor young scientists in ways that honor our moral duty as researchers to do no harm. One might seriously question whether Preneel has upheld that responsibility in this case.

I also believe that corporations have a responsibility to obey the law, including by not knowingly transmitting illegal material like CSAM on their servers. They also have a responsibility to not actively undermine ongoing police investigative work to detect that material. That responsibility was clearly abdicated in this case.

What the paper proves, and what it doesn’t

The paper suggests that PhotoDNA’s effective sensitivity for adversarial actors is near-zero. Anyone who reads this paper and wants to evade detection can do so in seconds. That matters.

What it doesn’t show is what Breyer claims — what cryptographers call a class break, an attack invalidating hash-matching as a detection approach entirely. That would fit the “nail in the coffin” framing. This paper shows only that one specific implementation can be defeated. A cryptographically stronger perceptual hash function — which this paper makes urgently necessary — could repair the vulnerability without touching anything else.

Better hash function → new attack → better function → newer attack… This sort of arms race is native to the cryptography ecosystem and has been for approximately 4,000 years.

The numbers

Deryck et al. connect to my framework for the causal effects of mass screenings for low-prevalence problems through the strategy pathway, not the classification pathway.

Here is what one possible causal diagram of likely downstream effects looks like: policy adversaries publish evasion methods → dedicated criminal actors learn and adopt them → effective sensitivity for the most harmful CSAM sharers collapses → detection burden falls entirely on casual and ignorant offenders → net child protection outcomes worsen.

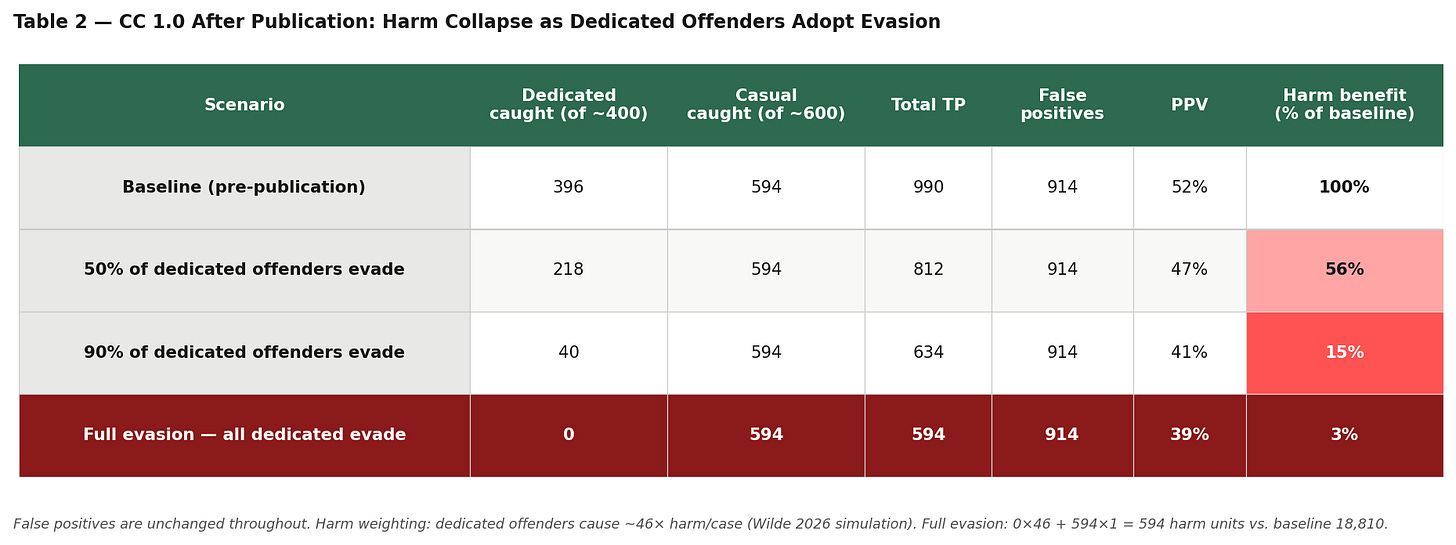

In my preprint, I modeled dedicated versus casual offender populations separately. Across the full parameter space, dedicated offenders dominate harm-weighted outcomes by a factor of 46 to 1. They are also, by definition, the people most likely to find and implement published evasion techniques. The policy adversaries have just handed them a gift.

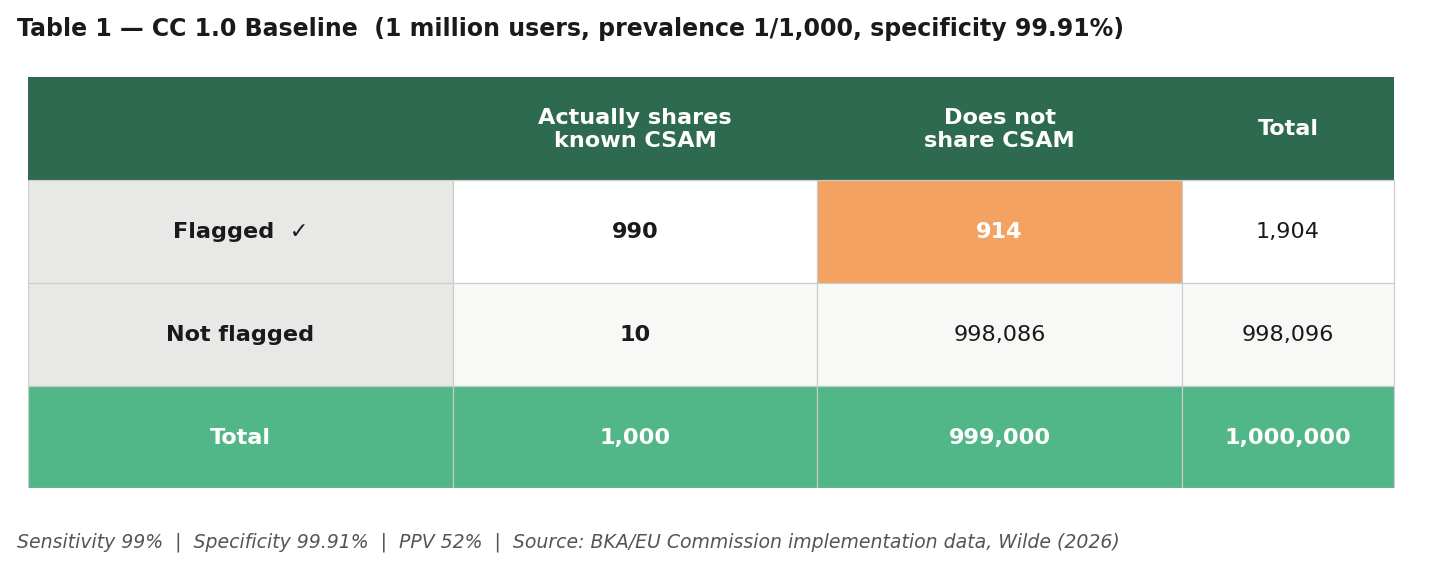

The table above shows estimated hypothetical outcomes for Chat Control 1.0 (existing EU mass scanning with PhotoDNA) before Deryck et al.’s publication: roughly 1,900 flagged cases per million users, with about 914 false alarms and 990 real cases caught. A false alarm means a human reviewer looks at images, presumably finds nothing criminal, and closes the case. It’s a resource cost and a privacy violation, but not a framing, because the evidence for a crime isn’t there when someone looks. A positive predictive value (PPV) of 52%, meaning roughly half of all flags are real cases, is worse than I originally estimated (my prior model assumed higher specificity), but manageable as a sorting task. It’s been tried and doesn’t seem to overwhelm investigative resources. So, from one possible child safety perspective, it worked.
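The 52% figure follows directly from the counts quoted above. A minimal Python check, using only the per-million numbers stated in this section (the variable names are chosen here for illustration):

```python
# Counts per million users, as quoted in the text for Chat Control 1.0.
false_alarms = 914   # flagged, but nothing criminal found on human review
true_cases = 990     # flagged, and a real case

# Every flag is either a false alarm or a real case.
flagged = false_alarms + true_cases

# Positive predictive value: the share of flagged cases that are real.
ppv = true_cases / flagged

print(f"flagged per million: {flagged}")  # 1904, i.e. "roughly 1,900"
print(f"PPV: {ppv:.1%}")                  # 52.0%
```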

The paper claims that false positives could be used to “incriminate” or “frame” innocent users. This framing warrants scrutiny.

A false positive under CC 1.0 means an automated hash match triggers human review. A trained analyst looks at the flagged images, presumably finds nothing criminal, and closes the case.

For the “framing” logic to hold, you would need: (1) the hash match to be treated as proof rather than a prompt for investigation (which is empirically not what happens in the current system); (2) investigators to then fabricate or ignore exculpatory evidence; (3) prosecutors to proceed anyway; (4) judges to collude in the miscarriage of justice; and (5) no other witnesses to the corruption (e.g., lawyers, court clerks) to tip off the media or otherwise blow the whistle.

That’s not a technical vulnerability that this attack creates, as the authors claim. Rather, it’s a theory of coordinated institutional corruption across multiple independent actors in modern EU member states which is not supported by evidence. It does not reflect a credible threat model for Germany, France, or the Netherlands in 2026.

Do the paper’s authors know how CSAM investigations actually work? If so, their framing would seem to intentionally inflate the false-positive harm to make that attack sound substantially more consequential than it is. If not, why didn’t they try to find out before publishing this paper?

The real harms of false positives are resource reallocation: they waste investigative resources, violate privacy during review, and potentially involve costs for the person flagged (if they find out). Those are serious costs. “Framing” is not the right word for them. Framing assumes bad intent.

The most plausible place in this chain where an actor has bad intent is dedicated attackers using false-positive attacks to overwhelm investigative resources. That’s not framing; it’s sabotage. But calling a cryptographic attack you publish “sabotage” doesn’t have quite the same civil libertarian ring.

Table 2 (above) contains hypothetical estimated outcomes illustrating what happens along the strategy pathway as dedicated offenders — the people producing and distributing organized child sexual abuse material — adopt the published evasion methods. The false positive count stays constant: the same innocent users still get flagged, the same investigative burden still falls on human reviewers to sort out true cases from false alarms. But the harm-weighted benefit collapses. Under full adoption, CC 1.0 catches only casual and ignorant offenders while missing essentially all organized abuse.
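One way to see the shape of that collapse is a back-of-the-envelope sketch. It is not the model from the preprint. It assumes, for illustration only, that the 46-to-1 ratio can be read as dedicated offenders accounting for 46/47 of the harm-weighted benefit of detection, that an offender who adopts the evasion methods is never caught, and that casual offenders do not adopt them. The function name and the adoption rates are hypothetical.

```python
def remaining_benefit(adoption: float, harm_ratio: float = 46.0) -> float:
    """Fraction of harm-weighted detection benefit left at a given adoption rate.

    Illustrative assumptions (not taken from the preprint's model):
    - dedicated offenders carry harm_ratio / (harm_ratio + 1) of the benefit
    - dedicated offenders who adopt the evasion methods are never detected
    - casual offenders do not adopt, so their share is unchanged
    """
    if not 0.0 <= adoption <= 1.0:
        raise ValueError("adoption must be between 0 and 1")
    dedicated_share = harm_ratio / (harm_ratio + 1.0)
    casual_share = 1.0 - dedicated_share
    # Only the non-adopting fraction of dedicated offenders is still caught.
    return casual_share + dedicated_share * (1.0 - adoption)


# Hypothetical adoption rates among dedicated offenders.
for adoption in (0.0, 0.5, 1.0):
    print(f"adoption {adoption:.0%}: benefit {remaining_benefit(adoption):.1%}")
```

Under these assumptions, full adoption leaves about 2% of the harm-weighted benefit (1/47), while the false alarm count is unchanged.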

A system generating the same costs and a fraction of the benefit is not a fixed system. It is a broken one. Broken by this paper.

The arms race they’ve entered

The authors followed coordinated vulnerability disclosure (CVD). They told Microsoft first, withheld some technical details, and restricted their code. This is better than nothing.

But the CVD norm was designed for corporate software vulnerabilities, not active child protection police work. It was designed for cases where the main threat is from researchers or nation-state actors who respect institutional norms and wait for patches. That is not the threat here. The main threat here is pedophiles. Pedophiles who distribute CSAM, who have no institutional affiliations to protect, who are already looking for evasion techniques, and who now have a peer-reviewed paper with a public URL that Patrick Breyer has put in a press release.

The cryptography community has a long, legitimate tradition of anti-surveillance work. Preneel is a signatory of the Copenhagen Resolution. I am not arguing against that tradition. I am arguing that it has a blind spot that this paper exemplifies: it does not adequately model the harm pathway that runs through criminal actors who benefit from the publication of evasion techniques. Publishing attacks on security infrastructure used to protect children, in order to intervene in a live legislative process, is a choice. It is possible to make a similar policy argument without it. I did.

Microsoft should accelerate patching. Platforms should audit their implementations now, without waiting for a public specification. The EU Commission should treat this vulnerability as an argument for better hash-matching, not as a reason to abandon detection. And the cryptography community should ask whether its disclosure norms, calibrated for corporate software, are appropriately calibrated when the system being attacked is CSAM detection infrastructure.

The researchers found real vulnerabilities. Their disclosure process was real. I still think they got the balance wrong.

We should not accept forced choices such as those between liberty and security without empirical evidence that we have to. There’s not an established evidentiary basis for this choice in this context. But this ambiguity cuts both ways: we don’t have to accept mass surveillance in the interests of child protection without proof that giving up some liberty here gets some security there. And we also don’t have to undermine active police child protection work in the interests of liberty.

Science should serve the public interest. Deryck et al. (2026) does not.