Validate Me

On the validation/transportability distinction, hard problems, and perverse incentives

We’re all looking for validation, but scientists are looking for it in a very particular way.

The Kurt Kuenne short film makes the colloquial sense beautiful: a parking attendant who validates parking and validates people — “you’re terrific, you have nice hair, the world is better with you in it.” We watch and we want to be told. We are told too rarely.

Scientists want to be told something else. We want to be told whether our inferences are valid. Did the screening program actually catch real cases, or did it relabel ambiguity as success? Did the algorithm reduce false positives, or did it just push them into a category we conveniently call true? Did the intervention work, or did the population we measured drift?

These are different questions. The first kind of validation makes you feel seen. The second kind asks whether your model corresponds to reality. The first is generous. The second is unsentimental, a perpetual project of critical self-reflection at best, and perhaps the most underrated unsolved problem in the methodology of mass screenings.

The validation/transportability distinction

In current causal inference, the closest thing we have to a real grip on cross-context inference is transportability. In “Causal inference and data fusion in econometrics” (The Econometrics Journal, 28(1), 41–82), Paul Hünermund and Elias Bareinboim formalize this like so: you encode source-target differences as selection nodes in a graph, run do-calculus, and the algorithm tells you whether the causal effect you estimated in one population is identifiable in another. If yes, you can transport. If no, the algorithm tells you what additional data you’d need.

This is beautiful. It is also, however, debatable. The trouble is that the solution (transportability) doesn’t work on quite the same level as the problem (validation).

This is as clean a current statement as I can muster of one corner of a longer conversation: Parts of structural econometrics (Matzkin’s nonparametric identification results being a touchstone; Matzkin 2012, 2013) and Pearl’s causal framework have been circling each other for decades. This circling has often come in the form of arguments about exactly which assumptions get smuggled in where. Pearl’s 2014 Haavelmo paper is the most explicit attempt to bridge the two traditions. Transportability is one of the places that conversation has produced something operational.

But the validation/transportability distinction may itself be an instantiation of the identifiability/indeterminacy distinction I’ve written about before: identifiability is when multiple parameters fit the data and more data could in principle resolve the ambiguity; indeterminacy is when model-reality correspondence is unverifiable in principle, and no quantity of additional data resolves it. Transportability lives in the identifiability register. Validation, where it works, lives there too. Validation, where it fails — Chat Control in its broadest sense, polygraphs as psychic X-rays, behavioral intent screening in general — lives in the indeterminacy register. And whether a given validation problem is solved in principle may itself be an indeterminate question. That’s part of why mass screening proponents and critics often talk past each other.

Transportability assumes the source-population effect is itself identifiable. It assumes you have an answer sheet somewhere. Then asks if we can logically say it ports.

The validation problem is a higher-level question: is there an answer sheet in principle? Can you, anywhere in the system, observe whether the classification was correct?

This is also an identification problem! But if this identification problem is unsolvable for the case we really care about, then we don’t need to do do-calculus to find out if another answer ports. It doesn’t. If the do-calculus says it does, we’ve done something wrong.

Experts and analysts alike have disincentives to conclude that the validation problem itself is unsolvable, or even to acknowledge it as unsolved.

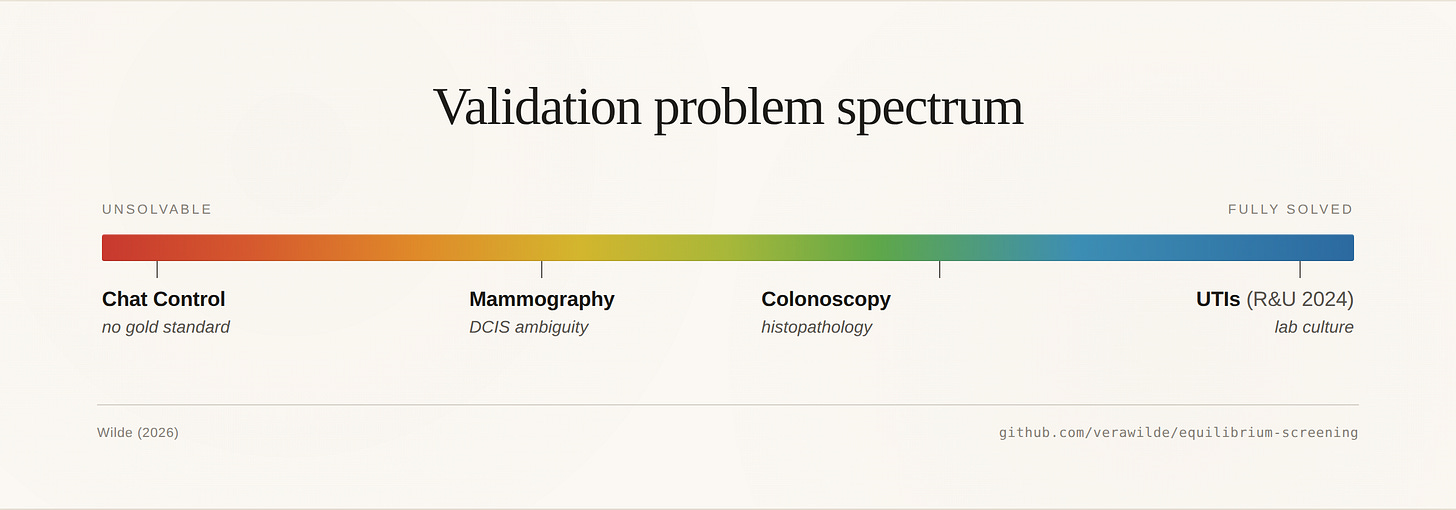

The validation problem spectrum

Here is the slide I keep coming back to, from my Rarity Roulette talk:

Chat Control sits on one end because the validation problem is unsolved in principle. There is not necessarily a future moment at which the system can confirm whether most flagged ambiguous communications in mass surveillance actually constituted cybercriminal badness (e.g., criminal grooming or terrorism financing). Even if we somehow got perfect downstream criminal-justice data, the screening itself changes who communicates how — the target quantity is partly constituted by the intervention measuring it. There is no answer sheet, and there cannot be one without abandoning the privacy logic that justifies the screening in the first place.

(“Chat Control” itself bundles multiple sub-problems with different validation profiles — known-CSAM hash matching is tractable, unknown-content AI scanning is blocked by resource arithmetic at scale, and intent-based classification like grooming detection is a constitutive ambiguous case where we shouldn’t even try. I worked through the known/unknown distinction here; the story could be filled out with this ambiguity portion in a later iteration. The version of Chat Control I’m calling “unresolvable in principle” here is that intent-classification case.)

UTIs in Danish primary care, on the other end — the beautiful special case Ribers and Ullrich (2024) exploit to analyze human-AI complementarity — sit at the fully-solved end. The clinician decides whether to prescribe antibiotics; the lab culture comes back; we see, post hoc and at scale, who actually had a UTI. That’s the answer sheet that makes their complementarity analysis possible.

It’s also why colonoscopy works for a similar move: histopathology eventually classifies the polyp once it’s removed, though we don’t see false negatives the way we do in the UTI case — missed polyps only surface later, as interval cancers or in subsequent screenings. And it’s why mammography mostly doesn’t: DCIS ambiguity contaminates the gold standard; biopsy-confirmed findings include an unknown proportion of cases that should arguably count as false positives.

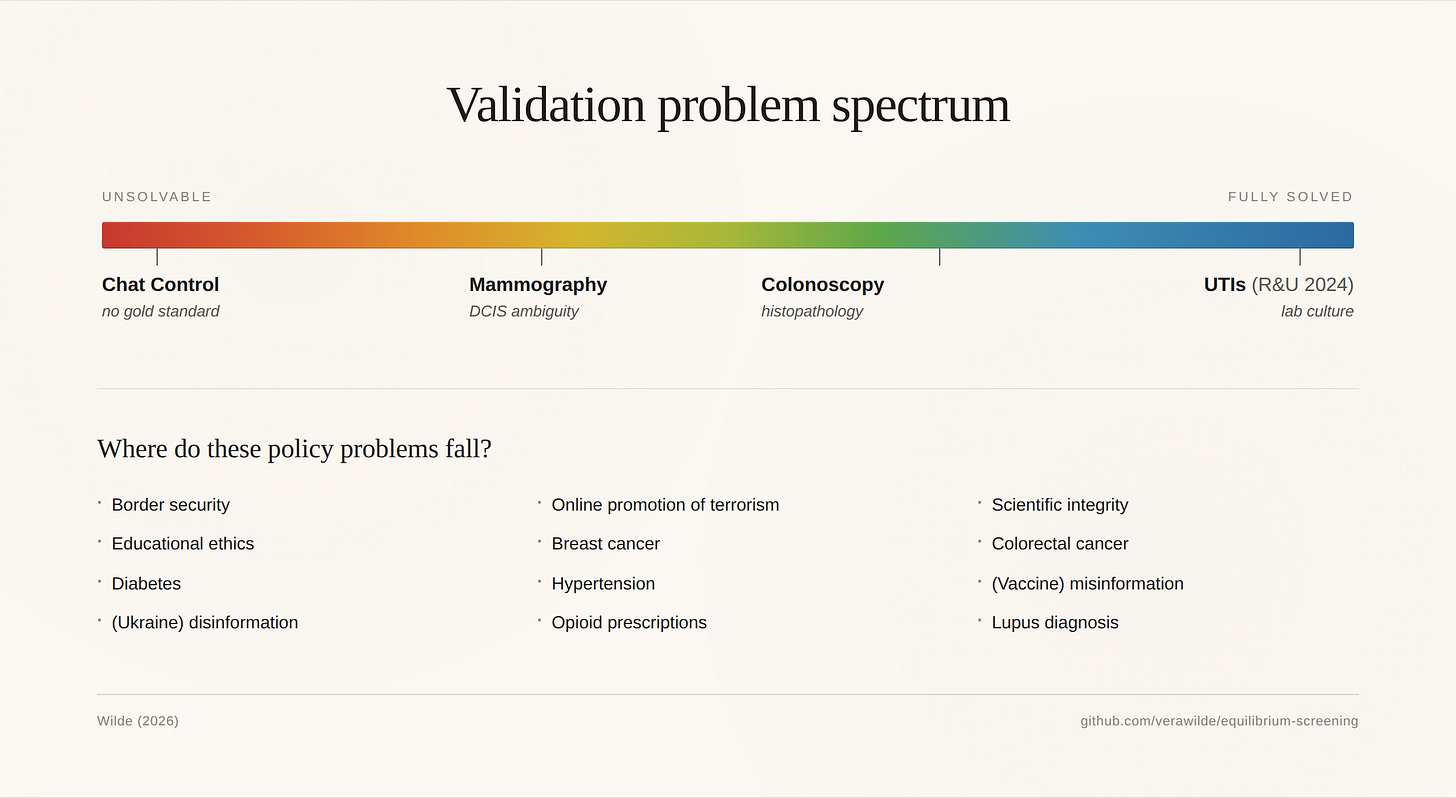

Lots of policy-relevant screening problems sit somewhere on this spectrum. Border security. Educational ethics. Diabetes. (Ukraine / your political hot topic here) disinformation. Online promotion of terrorism. Breast cancer. Hypertension. Opioid prescriptions. Scientific integrity. Colorectal cancer. (Vaccine / abortion / your scientific hot topic here) misinformation. Lupus diagnosis. Where each one falls is a substantive question that determines what methods can do for it. We mostly don’t ask how big or small (quantitatively) and how functionally limiting (qualitatively) the persistence of the validation problem is for our solutions. This should perhaps be the first thing we do.

Most of my current work is pushing the framework toward applications where some validation is eventually possible — AI-assisted screening in medicine being the concrete instance, where the classification pathway has been empirically tested (MASAI) but the strategic pathway question (deskilling, automation bias) is unresolved.

Is the validation problem harder in security or medicine?

Maybe my slide rainbow has it backwards, and security is harder than medicine: With a gun, you can do field experiments in airport security screening and there is no ground truth problem. It’s there or it’s not. With a cell mass, somebody had to decide — at some point, retroactively, never quite finally — when to call this progressive cancer and that one not. There is no God giving us the ground truth on cancer-cell appearance (thanks to Odette Wegwarth for this image). The ambiguity isn’t measurement noise around a true value; it’s that the true value was constituted by classification convention.

The cancer ambiguity is real, and DCIS is the cleanest example: ~80% of DCIS doesn’t progress to invasive disease (Welch et al. 2011; Esserman et al. 2014), and we treat it anyway, and the treatment counts as a “true positive” in screening evaluation. That’s an ugly fact and Odette is right to insist on it. But: cancer progression is observable in principle — at the limit of long, ethically impossible observational follow-up, you would see which cell mass killed someone and which sat there. Behavioral intent in security is not observable in principle. “Deceptive intent” is endogenous to the screening intervention; the screening defines the behavior space partly by existing.

These are different types of unsolvability. Cancer’s validation problem is empirical and ethical — we can’t run the trial that would resolve it. Security’s is constitutive — there is no trial because the construct depends on the test. The cancer case is harder in practice. The security case is harder in principle.

The constitutive case has its own technical literature — partly. Forré and Mooij’s work on σ-separation, and Mooij and Magliacane’s on identification under interventions in non-acyclic graphs, gets at part of this: when the screening intervention creates a feedback loop with the construct it claims to measure, the relevant identification machinery isn’t transportability across acyclic source-target pairs but identification under cyclic structural causal models. I’m not sure those tools resolve the constitutive case, either. And the cyclic SCM literature has its own legibility problems, which is part of why it hasn’t been more widely picked up. But they’re closer to it than the transportability literature, which assumes acyclicity. This is part of what I want to figure out.

I want to draw a Venn diagram for this. But I’m not sure it’s a Venn diagram. Maybe it’s two intersecting axes: constitutive vs empirical, and resolvable vs unresolvable in the relevant time horizon. I’d like a do-calculus answer to whether these collapse to the same thing under a sufficiently rich graph with the right selection nodes. But I’m not sure how to set up the problem. Because I’m not sure how you would know if your answer was right. Is it indeterminate? Or just undetermined (ahem) to me at the moment?

Why econometrics stopped writing about this

One possible reason: it doesn’t pay to write on your blog repeatedly that we can’t solve the validation problem to answer questions about causality that we’d really like to answer. (Or, rather, it pays approximately 11 euros a month.)

The transportability literature is rigorous and elegant and produces clean theorems. The validation problem mostly produces “this is harder than the published methods admit” — which is a less satisfying paper. So we get a generation of work that takes validation as solved when it isn’t, and reasons carefully about transport across contexts where the source effect is itself a classification artifact.

There’s also a third possibility that doesn’t depend on either the framework working as-is or being extended: if we can’t get ground truth, we might still characterize how dispersed expert priors are about the construct, and use that dispersion as a measure of how identifiable the construct really is from the data we can see. I’ve written previously about expert prior elicitation; Mikkola, Vehtari, Klami and colleagues’ 2024 Bayesian Analysis review is (afaik) the best statement of where we are, which is “still fairly far from having usable prior elicitation tools” (lolsob). For many of the same reasons the validation problem is a problem. But it’s a possible empirical direction forward, which is all we can ask for sometimes in science. And the methodological breadth and depth it would require — causal inference, expert elicitation, decision theory, organizational data access — implicates the need for collaborative science. With good collaborators, that’s a very exciting prospect.

If do-calculus can tell us when validation is structurally unidentifiable, cleanly, then we have a real result. If it doesn’t — if the framework just shrugs and says “you’d need different data” — then we have a different result, which is that the framework needs an extension to handle constitutive validation.

Either is interesting. Both would help. The first step in either case is asking whether the answer sheet exists, and how to try to engineer one — if possible — if it doesn’t.

As Stephen Fienberg said in 2009: “They said, ‘So how would you validate this?’ and I said ‘I don’t know.’ ”